Do Infants Smile Like Adults? Modelling the Development of Emotional Expressions Using Deep Learning

A comparative deep learning study investigating how facial emotional expressions evolve from infancy to adulthood. Two ResNet-18 models — one trained on infant faces, one on adult faces — are evaluated across five developmental age groups to identify when childhood expressions begin to resemble adult-like patterns.

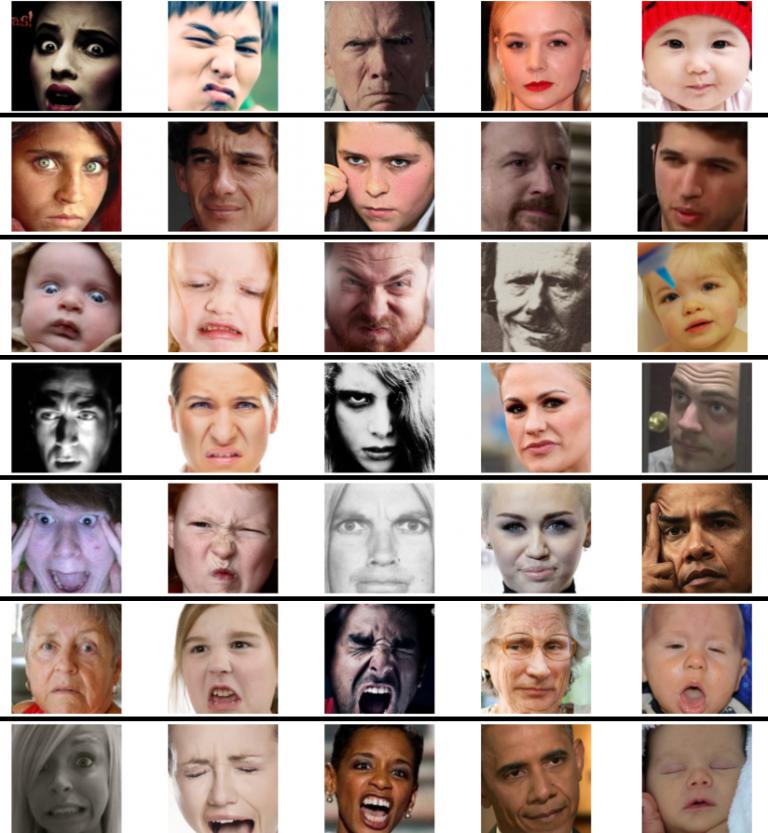

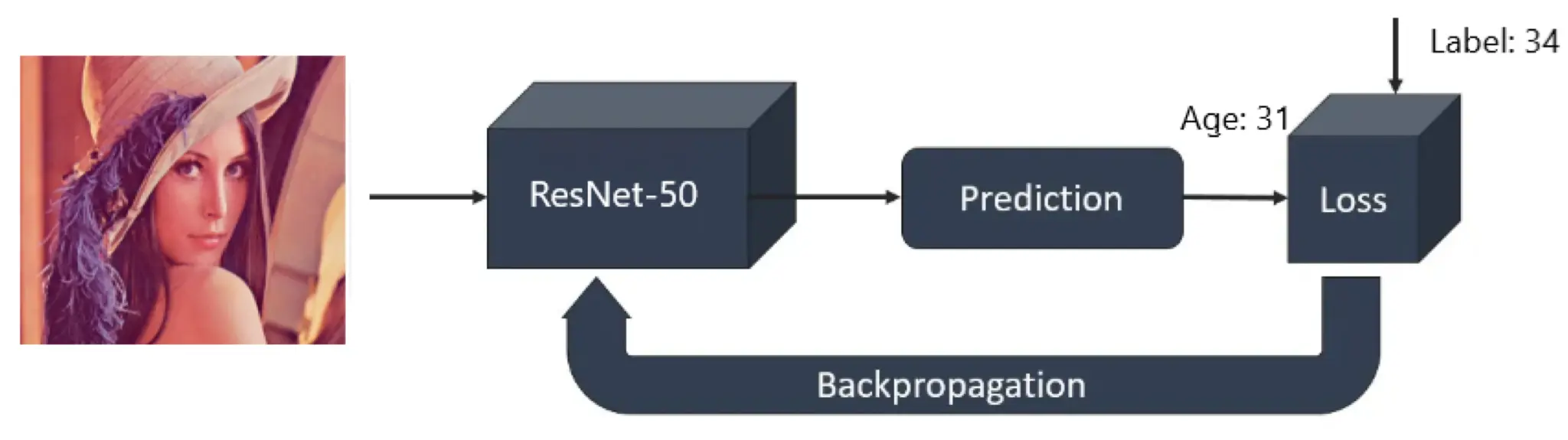

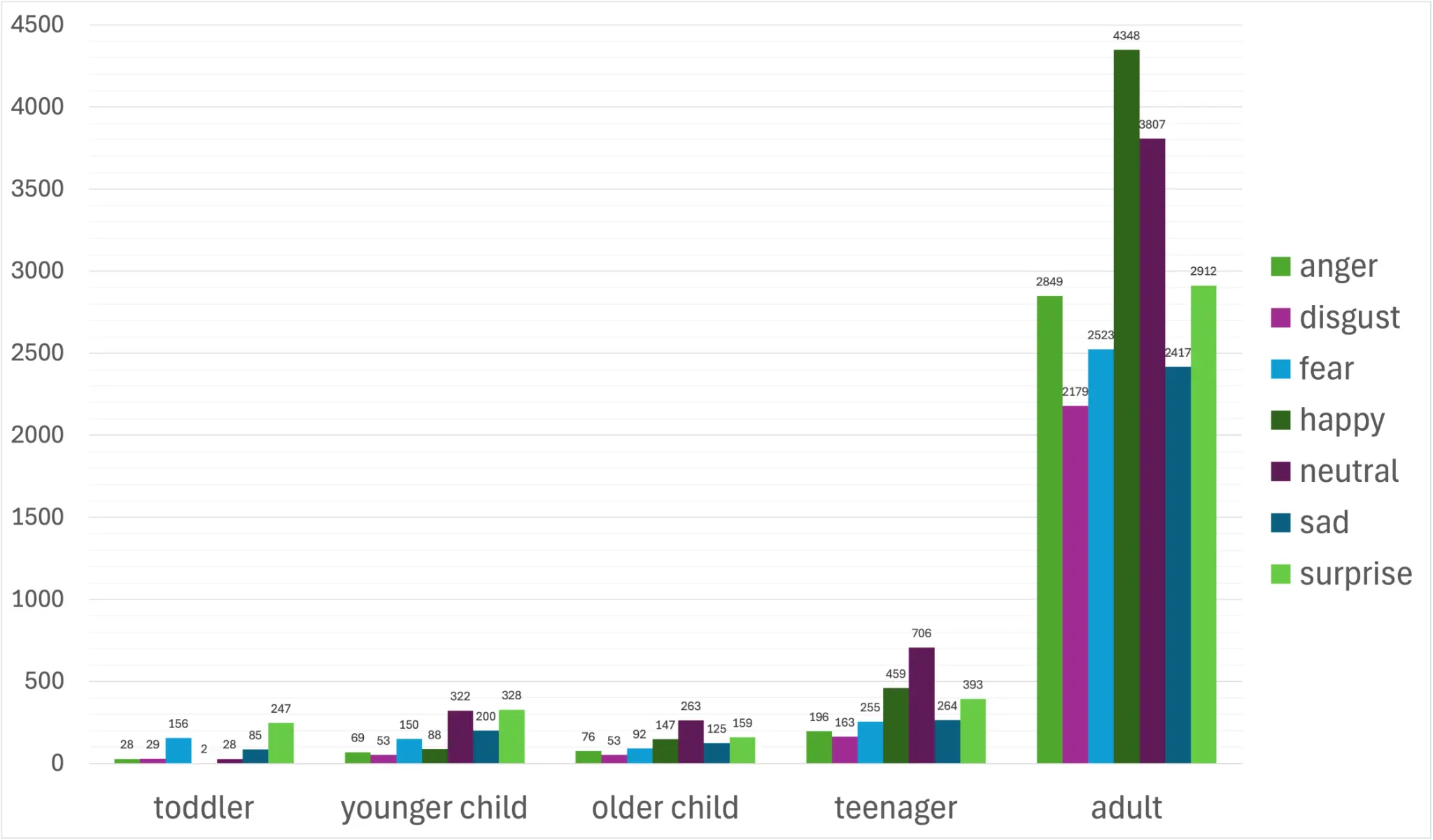

Understanding the developmental transition from infant to adult facial emotional expression is crucial for both neurodevelopmental psychology and practical caregiving. This study trains two separate deep neural networks, one on an infant facial expression dataset and one on an adult dataset. Images from the Tromsø Infant Faces Database (TIF) and AffectNet are preprocessed and segmented into distinct age groups with a ResNet-50 age estimator. Both models use a modified ResNet-18 architecture trained with a combined distance loss that encourages more discriminative features. Experiments reveal that the infant model's accuracy declines linearly with test subject age, suggesting that distinct infant expression features diminish over time. Conversely, the adult model performs best on older children and teenagers, indicating a nonlinear trajectory toward adult-like expression.

"At what developmental stage does a child's facial emotional expression become recognisably adult-like — and can deep learning models trained on infant or adult faces capture this transition?"

Two Complementary Datasets

Key Technical Contributions


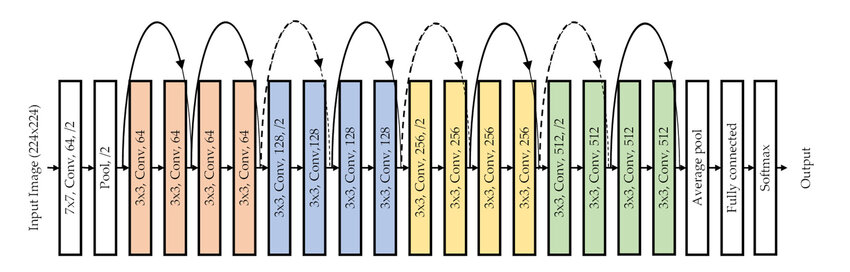

Modified ResNet-18 Backbone

Both models share the same ResNet-18 foundation, initialised with ImageNet pretrained weights. The final fully-connected layer is replaced with a custom classification head: a dropout layer followed by a linear layer outputting predictions for seven emotion classes.

| Parameter | Infant model | Adult model |
|---|---|---|
| Backbone | ResNet-18 | ResNet-18 |
| Layer freezing | None (all trainable) | Layer 1 frozen |
| Dropout | 0.5 | 0.7 |
| Initial LR | 0.0005 | 1e-5 |
| Weight decay | 0.0005 | 2e-3 |
| Label smoothing | None | α = 0.05 |
| Distance loss λ | 0.0035 | tuned separately |
| Early stopping | Epoch 28 | Patience 8 |
| Optimiser | Adam | Adam |
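The table lists a distance-loss weight λ and a label-smoothing α, but the exact loss formula is not given here. The following is a minimal sketch, assuming the combined objective takes the common form of cross-entropy plus λ times a centre-style distance term. The function names, the choice of half squared Euclidean distance, and the per-class `centers` lookup are illustrative assumptions, not the study's implementation. Plain Python is used so the arithmetic stays visible; real training code would operate on framework tensors.

```python
import math


def smoothed_cross_entropy(logits, target, alpha=0.05):
    """Cross-entropy against a label-smoothed target distribution."""
    num_classes = len(logits)
    # Numerically stable log-softmax.
    peak = max(logits)
    log_norm = peak + math.log(sum(math.exp(z - peak) for z in logits))
    log_probs = [z - log_norm for z in logits]
    loss = 0.0
    for k, log_p in enumerate(log_probs):
        # Smoothed target: alpha / K on every class, plus (1 - alpha) on the true class.
        weight = alpha / num_classes + ((1.0 - alpha) if k == target else 0.0)
        loss -= weight * log_p
    return loss


def center_distance(feature, center):
    """Half the squared Euclidean distance between a feature and a class centre."""
    return 0.5 * sum((f - c) ** 2 for f, c in zip(feature, center))


def combined_loss(logits, feature, target, centers, lam=0.0035, alpha=0.0):
    """Assumed form: classification loss + lam * distance to the true-class centre."""
    classification = smoothed_cross_entropy(logits, target, alpha)
    distance = center_distance(feature, centers[target])
    return classification + lam * distance
```

With a small λ such as 0.0035, the distance term acts as a gentle regulariser on the feature space rather than dominating the classification loss.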

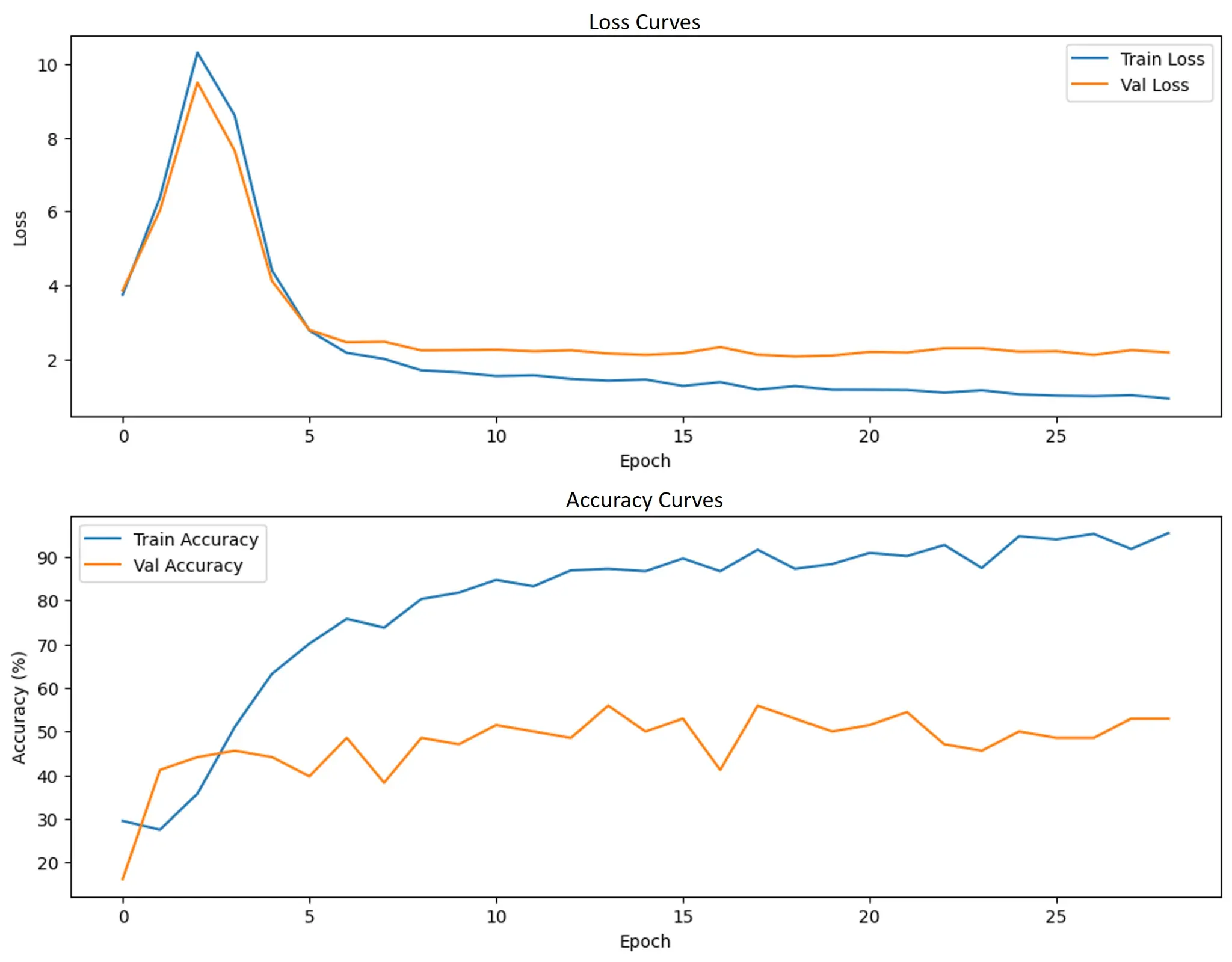

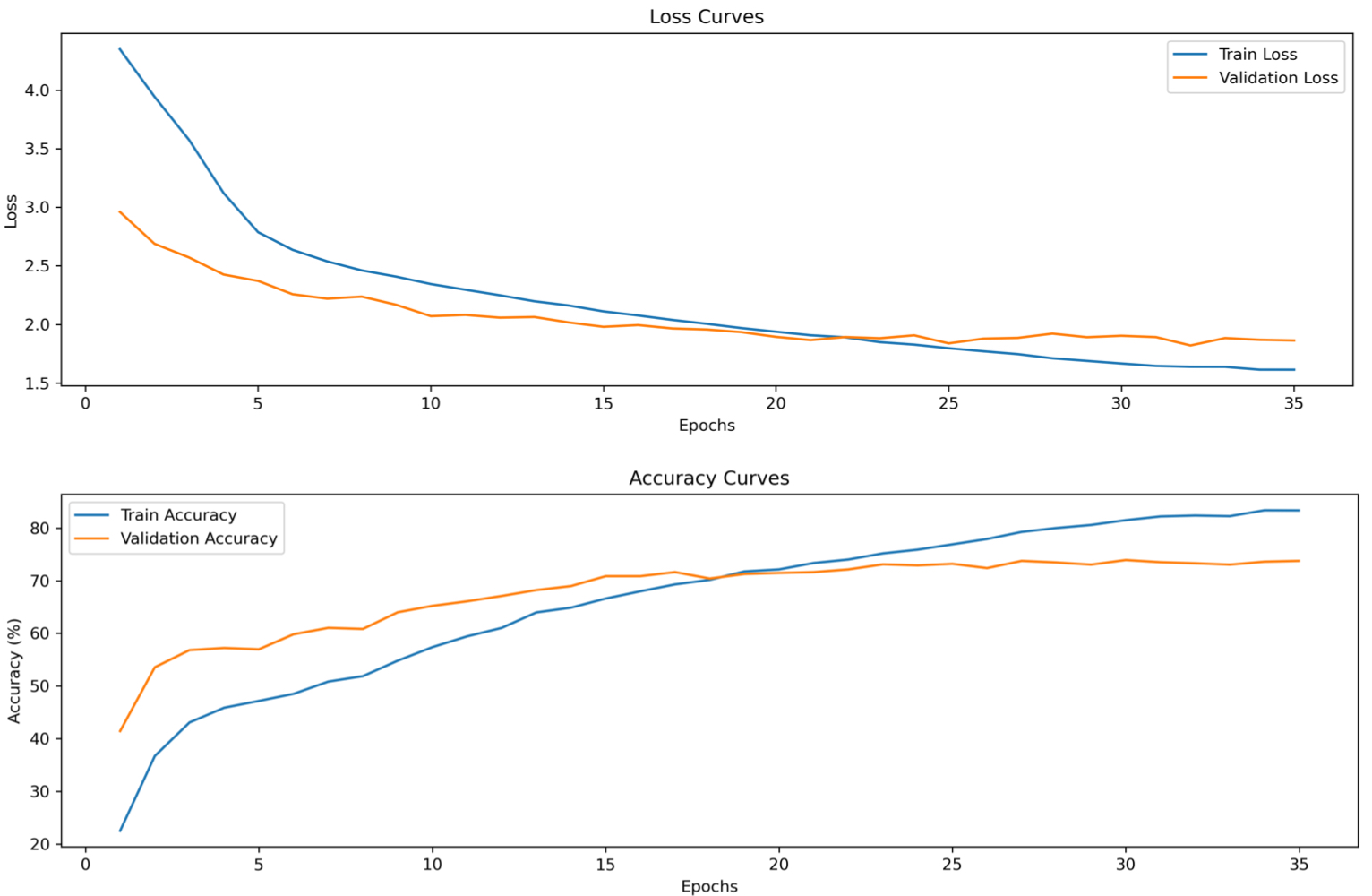

Infant & Adult Model Training Curves
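The hyperparameter table lists early stopping with a patience of 8 for the adult model. A minimal sketch of patience-based early stopping follows, assuming validation loss is the monitored quantity. The class name, the `min_delta` parameter, and the choice of metric are hypothetical and may differ from the project's training loop.

```python
class EarlyStopping:
    """Stop training once the monitored loss has not improved for `patience` epochs."""

    def __init__(self, patience=8, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta  # hypothetical: minimum change that counts as improvement
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def stopping_epoch(losses, patience):
    """Return the index of the epoch at which training would stop, or None."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(losses):
        if stopper.step(loss):
            return epoch
    return None
```

A training loop would typically save a checkpoint whenever `best` improves, so the weights from the best epoch can be restored after stopping.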

Cross-Age Generalisation

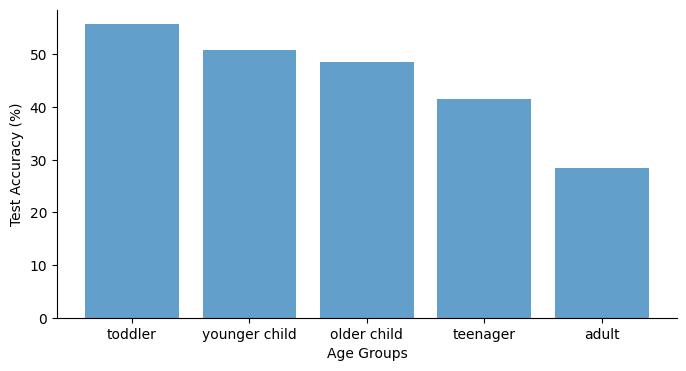

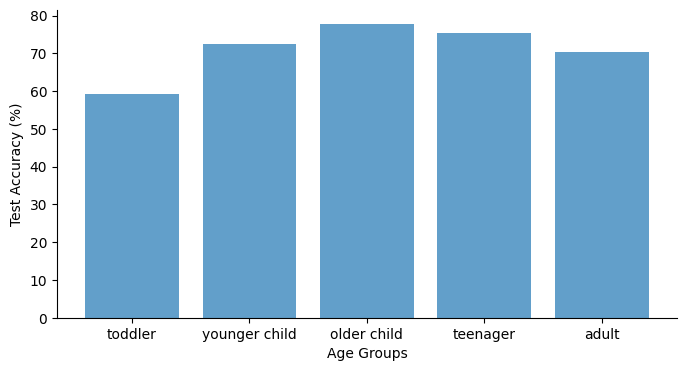

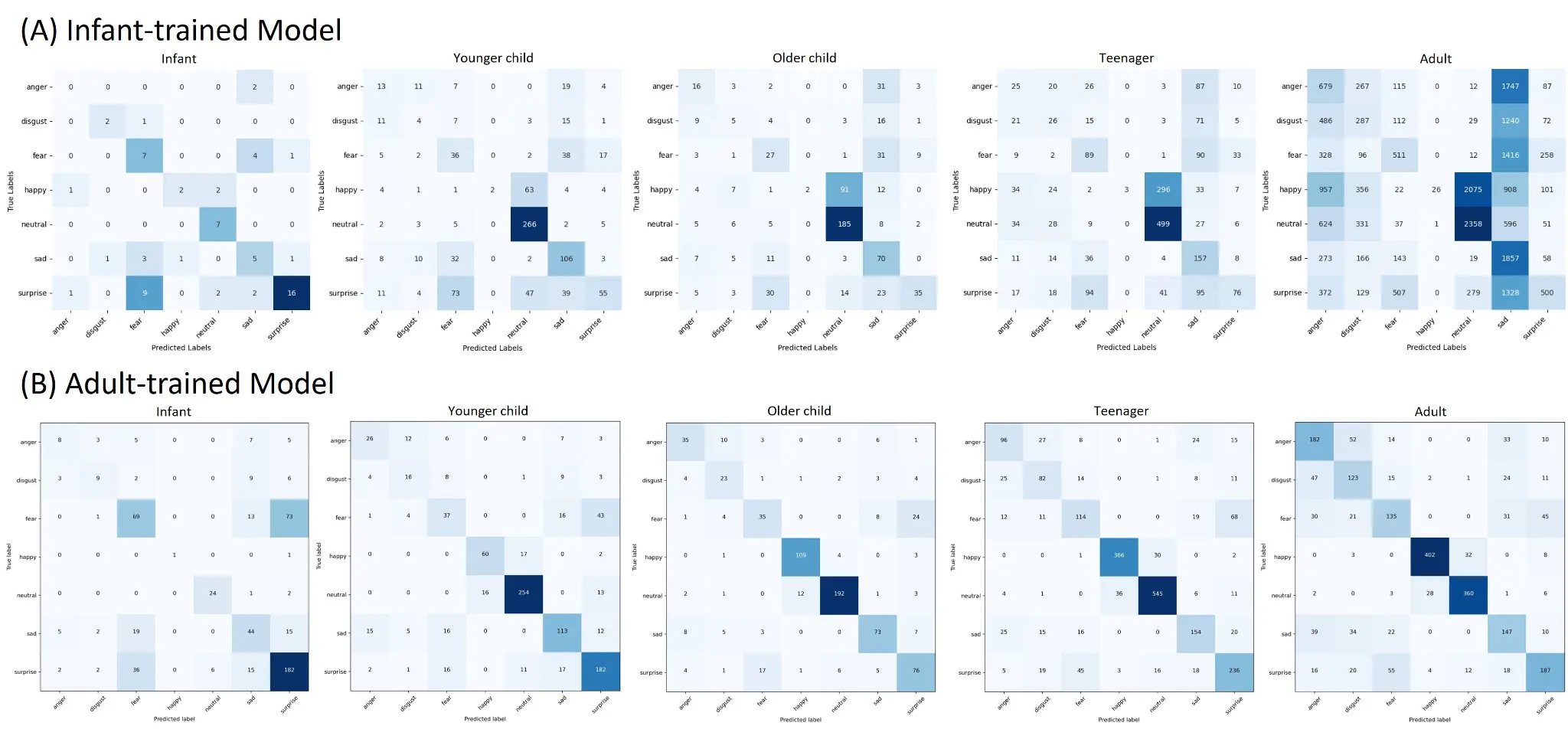

Each model was evaluated on all five age groups. The contrasting generalisation curves of the two models are the central finding of the study.

The infant-trained model achieves its best performance on infant images (55.71%) and degrades monotonically with age, reaching only 28.49% on adults. This linear decline supports the hypothesis that infant emotional expressions carry distinctive visual features that are progressively lost or transformed as a child develops.

Notably, performance remained above chance (14.29%) across all age groups, suggesting that some expressive features are preserved across development — though these shared characteristics appear limited compared to age-specific patterns.

| Age group | Infant model | Adult model |
|---|---|---|
| Infant (1–3 yrs) | 55.71% | 59.12% |
| Younger Child (4–8) | 50.90% | 72.57% |
| Older Child (8–12) | 48.64% | 77.68% |
| Teenager (13–17) | 41.49% | 75.50% |
| Adult (18+) | 28.49% | 70.30% |
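The claims drawn from this table can be checked directly from the reported numbers. The short sketch below uses only the accuracies above and the seven-class chance level; the helper names are illustrative and not part of the project code.

```python
GROUPS = ["Infant", "Younger Child", "Older Child", "Teenager", "Adult"]
INFANT_MODEL = [55.71, 50.90, 48.64, 41.49, 28.49]  # accuracy in %, from the table
ADULT_MODEL = [59.12, 72.57, 77.68, 75.50, 70.30]   # accuracy in %, from the table
NUM_CLASSES = 7

# Chance accuracy for a uniform guess over seven emotion classes.
chance = 100.0 / NUM_CLASSES


def is_strictly_decreasing(values):
    """True if every value is lower than the one before it."""
    return all(a > b for a, b in zip(values, values[1:]))


def peak_group(values):
    """Name of the age group with the highest accuracy."""
    best = max(range(len(values)), key=values.__getitem__)
    return GROUPS[best]


def drops(values):
    """Accuracy lost between each pair of consecutive age groups."""
    return [round(a - b, 2) for a, b in zip(values, values[1:])]


if __name__ == "__main__":
    print(f"chance level: {chance:.2f}%")
    print("infant model declines with age:", is_strictly_decreasing(INFANT_MODEL))
    print("adult model peaks on:", peak_group(ADULT_MODEL))
    print("infant model step-to-step drops:", drops(INFANT_MODEL))
```

Inspecting the step-to-step drops is a quick way to judge how closely the infant model's decline follows a straight line across the ordered age groups.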

Findings & Limitations

The experiments provide computational evidence that emotional expressions evolve in a continuous and nonlinear manner. The infant model's linear decline is consistent with infant facial expressions being visually distinct and becoming progressively more adult-like over time. The adult model's nonlinear trajectory, which peaks on older children and teenagers, suggests that key features of adult-like expression emerge during late childhood and adolescence.

These findings align with developmental psychology literature suggesting that while basic expressions appear in infancy, how we express, perceive, and interpret emotion becomes more complex over time. The results offer a useful computational perspective alongside behavioural and observational studies.

Emotion labels for infant images were assigned by adults and may not reflect the infants' internal states. Subtle facial movements may not map cleanly onto adult-defined categories.

With only 687 infant images spread across seven classes, class imbalance is severe and the model's ability to learn robust features is limited.

TIF was collected in Norway with Northern European participants; AffectNet underrepresents Mandarin, Hindi, and French speakers. Cultural norms around emotional expression differ significantly.

ResNet-50 age estimation errors near group boundaries can misassign images, introducing noise into developmental comparisons.
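To illustrate the boundary problem, here is a minimal sketch of mapping an estimated age to one of the five groups and flagging estimates that fall close to a cut point. The exact cut points are assumptions: the reported ranges overlap at age 8, so this sketch arbitrarily assigns age 8 to Older Child and starts the Infant group at 0. The function names and the one-year margin are likewise hypothetical.

```python
# (name, lower bound inclusive, upper bound exclusive); cut points are assumed.
AGE_GROUPS = [
    ("Infant", 0, 4),
    ("Younger Child", 4, 8),
    ("Older Child", 8, 13),
    ("Teenager", 13, 18),
    ("Adult", 18, float("inf")),
]


def assign_group(age):
    """Map an estimated age in years to an age-group name."""
    for name, low, high in AGE_GROUPS:
        if low <= age < high:
            return name
    raise ValueError(f"age must be non-negative, got {age}")


def is_near_boundary(age, margin=1.0):
    """True if the estimate lies within `margin` years of any group cut point."""
    cuts = [low for _, low, _ in AGE_GROUPS[1:]]
    return any(abs(age - cut) < margin for cut in cuts)
```

For example, estimates of 7.6 and 8.4 years differ by less than a year yet land in different groups, so images flagged by `is_near_boundary` are the ones most likely to add noise to the comparison.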

AgeEstimationModel — ResNet-50/EfficientNet/ViT variants

Training loop with Adam, early stopping, checkpointing

Distance loss, center loss, evaluation utilities

Dataset class with augmentation pipeline

Grid search over LR, dropout, weight decay

Exploratory data analysis and dataset statistics

Inference on new images with pretrained weights

Training curves, confusion matrix, age distribution plots

Dataset CSV generation from AffectNet directory