DEEP REINFORCEMENT LEARNING · AUTONOMOUS CONTROL

End-to-End RL for Autonomous Racetrack Navigation

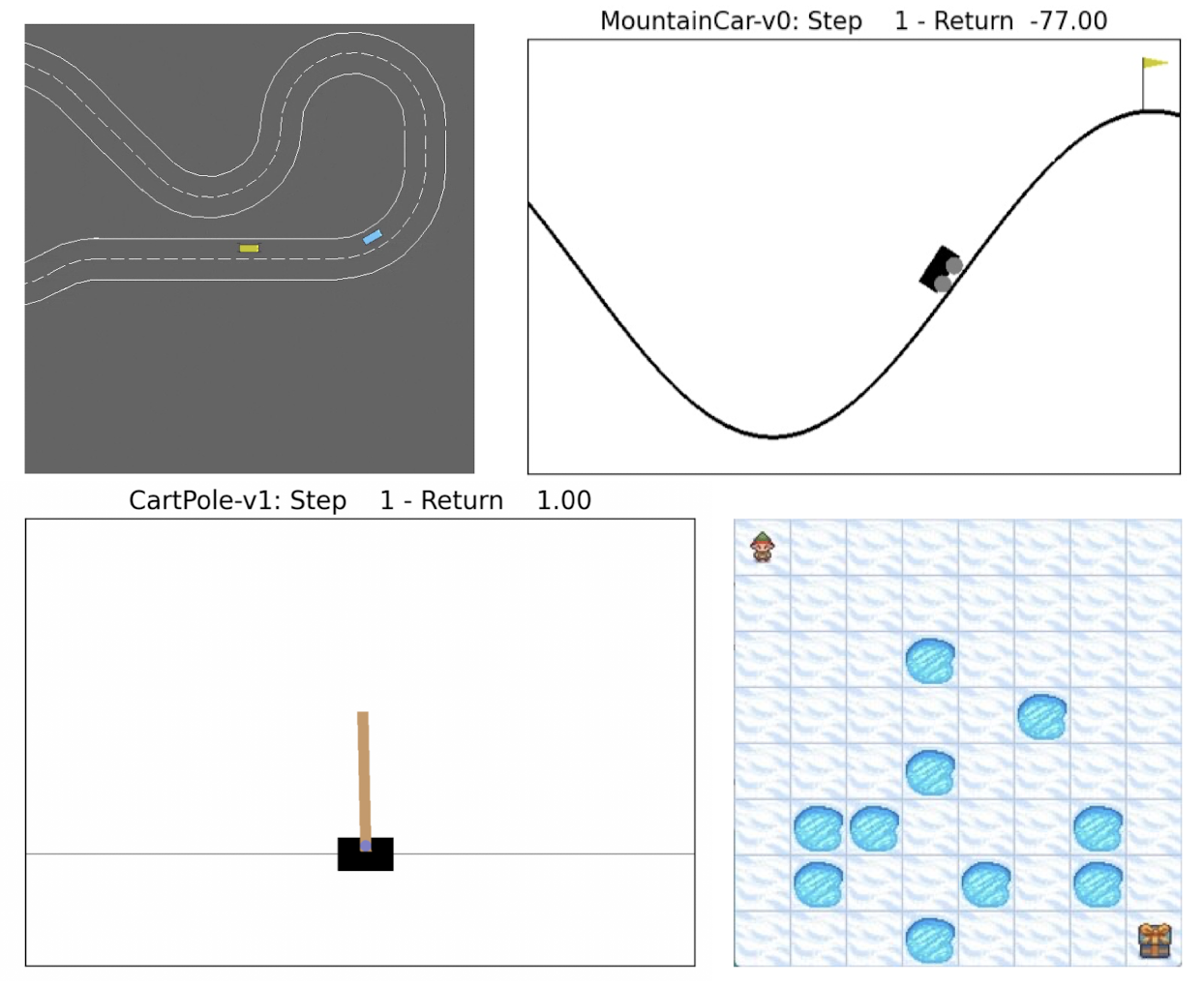

A progressive implementation of reinforcement learning — from classical dynamic programming to deep actor-critic methods — culminating in an agent that navigates a simulated racetrack with continuous steering and throttle control. Five algorithms across five exercises, implemented as coursework for the Reinforcement Learning course at the University of Edinburgh.

Learning Progression

Each exercise builds on the previous one, moving from exact dynamic programming through tabular and deep value-based methods to deep actor-critic methods for continuous control.

Value Iteration & Policy Iteration on a custom MDP. Exact solutions, full model required.

Q-Learning & Monte Carlo on FrozenLake-8x8. No model needed — learn from experience.

DQN with experience replay and target networks. Scales RL to continuous state spaces.

Actor-Critic on Racetrack-v0. First algorithm with continuous steering and throttle.

Enhanced DDPG on Racetrack-v0: Prioritised Experience Replay, adaptive noise scheduling, orthogonal weight initialisation, and a hyperparameter sweep over 60+ models.
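To make the first two steps of the progression concrete, here is a minimal sketch contrasting a model-based backup (value iteration) with a model-free update (tabular Q-learning). The two-state MDP, the transition table `P`, and the function names are invented for illustration; they are not the coursework's custom MDP or its code.

```python
# Hypothetical 2-state, 2-action MDP, invented for illustration only.
# P[s][a] is a list of (probability, next_state, reward) tuples.
P = {
    0: {0: [(1.0, 0, 0.0)], 1: [(0.8, 1, 1.0), (0.2, 0, 0.0)]},
    1: {0: [(1.0, 0, 0.0)], 1: [(1.0, 1, 2.0)]},
}


def q_value(P, V, s, a, gamma):
    """Expected one-step return of action a in state s under the known model."""
    return sum(p * (r + gamma * V[s2]) for p, s2, r in P[s][a])


def value_iteration(P, gamma=0.9, theta=1e-8):
    """Apply Bellman optimality backups until the largest change is below theta."""
    V = {s: 0.0 for s in P}
    while True:
        delta = 0.0
        for s in P:
            best = max(q_value(P, V, s, a, gamma) for a in P[s])
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < theta:
            break
    # Greedy policy with respect to the converged values.
    policy = {s: max(P[s], key=lambda a: q_value(P, V, s, a, gamma)) for s in P}
    return V, policy


def q_learning_update(Q, s, a, r, s2, alpha=0.1, gamma=0.99, done=False):
    """One model-free update from a single sampled transition (s, a, r, s2)."""
    target = r if done else r + gamma * max(Q[s2].values())
    Q[s][a] += alpha * (target - Q[s][a])
    return Q[s][a]


V, policy = value_iteration(P)
```

Value iteration needs the full table `P`, whereas `q_learning_update` only ever sees one sampled transition at a time, which is the shift the second exercise makes.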

Algorithm Deep Dives

Experimental Results

| Algorithm | Type | Environment | Key Result |
|---|---|---|---|
| Value Iteration | Dynamic Programming | Custom MDP | Optimal policy, exact convergence |
| Q-Learning (gamma=0.99) | Tabular RL | FrozenLake-8x8 | Converges in 10K episodes |
| Monte Carlo (gamma=0.99) | Tabular RL | FrozenLake-8x8 | Converges in 300K episodes |
| DQN (linear, frac=0.01) | Deep RL | CartPole-v1 | Solves within 50K steps |
| DDPG | Actor-Critic | Racetrack-v0 | Target return: 500.0 |
| Enhanced DDPG | Actor-Critic + PER | Racetrack-v0 | Mean return: 374.86 ± 52.78 |

Key Contribution — Enhanced DDPG

Prioritised Replay with Adaptive Exploration

The Enhanced DDPG extends the baseline with three targeted improvements. Prioritised Experience Replay (PER) samples each transition with probability proportional to its TD-error magnitude, so the agent revisits the most informative experiences more often, which accelerates learning on difficult transitions.
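A minimal sketch of proportional prioritised sampling, assuming the standard formulation in which priority is `(|TD error| + eps) ** alpha` and importance-sampling weights correct for the non-uniform sampling. The class name `ProportionalReplay` and the values of `alpha`, `eps`, and `beta` are illustrative assumptions, not the project's settings, and a list replaces the usual sum-tree for brevity.

```python
import random


class ProportionalReplay:
    """List-based prioritised buffer: O(N) sampling, no sum-tree (illustrative)."""

    def __init__(self, capacity, alpha=0.6, eps=1e-3):
        self.capacity = capacity
        self.alpha = alpha      # 0 gives uniform sampling, 1 fully proportional
        self.eps = eps          # keeps zero-error transitions sampleable
        self.data = []
        self.priorities = []
        self.pos = 0            # next slot to overwrite once the buffer is full

    def add(self, transition):
        # New transitions take the current maximum priority so they are
        # likely to be replayed before their TD error is known.
        p = max(self.priorities, default=1.0)
        if len(self.data) < self.capacity:
            self.data.append(transition)
            self.priorities.append(p)
        else:
            self.data[self.pos] = transition
            self.priorities[self.pos] = p
        self.pos = (self.pos + 1) % self.capacity

    def probabilities(self):
        total = sum(self.priorities)
        return [p / total for p in self.priorities]

    def sample(self, batch_size, beta=0.4, rng=random):
        probs = self.probabilities()
        n = len(self.data)
        idxs = rng.choices(range(n), weights=probs, k=batch_size)
        # Importance-sampling weights, normalised by the largest in the batch.
        weights = [(n * probs[i]) ** (-beta) for i in idxs]
        max_w = max(weights)
        weights = [w / max_w for w in weights]
        return idxs, [self.data[i] for i in idxs], weights

    def update(self, idxs, td_errors):
        # Called after a learning step with the fresh TD errors of the batch.
        for i, d in zip(idxs, td_errors):
            self.priorities[i] = (abs(d) + self.eps) ** self.alpha
```

In a training loop, the critic loss for each sampled transition would be scaled by its weight, and `update` would be called with the new TD errors after every gradient step.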

Adaptive noise scheduling starts exploration at std = 0.8 and decays over training, with periodic bursts to escape local optima. Orthogonal weight initialisation (critic gain 1.0, actor gain 0.1) improves gradient flow in early training. The best configuration from a 60+ model sweep achieved a mean return of 374.86 ± 52.78 on Racetrack-v0.
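The noise schedule described above can be sketched as a function from training step to exploration standard deviation. Only the starting value of 0.8 comes from the text; the exponential decay shape, the floor `end_std`, and the burst period, length, and magnitude are assumptions chosen for illustration.

```python
def noise_std(step, total_steps, start_std=0.8, end_std=0.05,
              burst_every=10_000, burst_len=500, burst_std=0.4):
    """Exploration std at a given step: decaying baseline plus periodic bursts.

    All parameters except start_std=0.8 are hypothetical.
    """
    frac = min(max(step / total_steps, 0.0), 1.0)
    # Exponential interpolation from start_std (frac=0) to end_std (frac=1).
    std = start_std * (end_std / start_std) ** frac
    # Every burst_every steps, raise the noise to at least burst_std for
    # burst_len steps to help the agent leave a local optimum.
    if step >= burst_every and step % burst_every < burst_len:
        std = max(std, burst_std)
    return std
```

The returned value would typically scale zero-mean Gaussian noise added to the actor's steering and throttle outputs before clipping them to the action bounds.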

Technologies