Computer Vision · Semantic Segmentation · Oxford-IIIT Pet Dataset

Image Segmentation with UNet Architectures & Prompt-based SAM2

Four segmentation approaches -- custom UNet, autoencoder pre-training, CLIP fusion, and SAM2 prompt-based segmentation -- evaluated on the Oxford-IIIT Pet dataset. Which architecture actually learns to tell a cat from a dog?

Authors

Saravut Lin · Ulixes Hawili

Institution

University of Edinburgh

Dataset

Oxford-IIIT Pet (7,400 images)

Best Result

SAM2 · IoU 0.815 · Dice 0.889

01 · Dataset & Preprocessing

Oxford-IIIT Pet Dataset

7,400 images spanning 37 pet breeds (~200 per breed), each with pixel-level masks labelled as background (0), cat (1), dog (2), or ignore (255). The dataset presents three key challenges: a 2:1 dog-to-cat imbalance, mixed mask formats, and corrupt samples that must be filtered.

After preprocessing and class-specific augmentation (5x for cats, 2x for dogs), the final training set contains approximately 11,661 image-mask pairs at 512x512 resolution, with a cat-to-dog ratio close to 1:1.

Corrupt file detection

OpenCV + PIL filter to remove unreadable samples

Stratified split

20% validation, preserving class ratio, no data leakage

Aspect-ratio preserving resize

Longest-side scaling + zero-padding to 512x512

Class-specific augmentation

Spatial, elastic, pixel-level, noise, lighting, occlusion transforms

Mask integrity validation

cv2.INTER_NEAREST + label set check {0,1,2,255} after every transform
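
The resize and mask-integrity steps above can be sketched in a few lines. This is a minimal NumPy illustration, not the project's code: it swaps `cv2.resize(..., interpolation=cv2.INTER_NEAREST)` for plain nearest-neighbour indexing so it runs without OpenCV, and the function names (`letterbox`, `check_mask`) and the top-left padding placement are assumptions.

```python
import numpy as np

# Label set quoted in the dataset description: background, cat, dog, ignore.
VALID_LABELS = {0, 1, 2, 255}

def letterbox(arr, size=512, pad_value=0):
    """Scale the longest side to `size` (nearest neighbour), then zero-pad.

    Nearest-neighbour sampling only copies existing pixels, so a mask can
    never gain an interpolated label such as 1.5 or 128.
    """
    h, w = arr.shape[:2]
    scale = size / max(h, w)
    new_h = max(1, round(h * scale))
    new_w = max(1, round(w * scale))
    # For every output row/column, pick the source row/column it maps back to.
    rows = np.minimum((np.arange(new_h) / scale).astype(int), h - 1)
    cols = np.minimum((np.arange(new_w) / scale).astype(int), w - 1)
    resized = arr[rows][:, cols]
    # Assumption: content sits in the top-left corner, padding fills the rest.
    out = np.full((size, size) + arr.shape[2:], pad_value, dtype=arr.dtype)
    out[:new_h, :new_w] = resized
    return out

def check_mask(mask):
    """Raise if the mask contains any label outside {0, 1, 2, 255}."""
    unexpected = set(np.unique(mask).tolist()) - VALID_LABELS
    if unexpected:
        raise ValueError(f"unexpected mask labels: {sorted(unexpected)}")
    return mask

# Usage: a 100x200 toy mask with a dog region and one ignore pixel.
mask = np.zeros((100, 200), dtype=np.uint8)
mask[20:60, 50:150] = 2
mask[0, 0] = 255
padded = check_mask(letterbox(mask))
```

Running the label check after every transform, as the pipeline description states, catches any augmentation that silently switches to a smoothing interpolation.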

Dataset Statistics

Total images

7,400

Breeds

37

After augmentation

11,661

Resolution

512 x 512

Cat samples

~5,688

Dog samples

~5,973


02 · Architectures

Four Approaches, One Dataset

Each model represents a different hypothesis about how to learn segmentation.

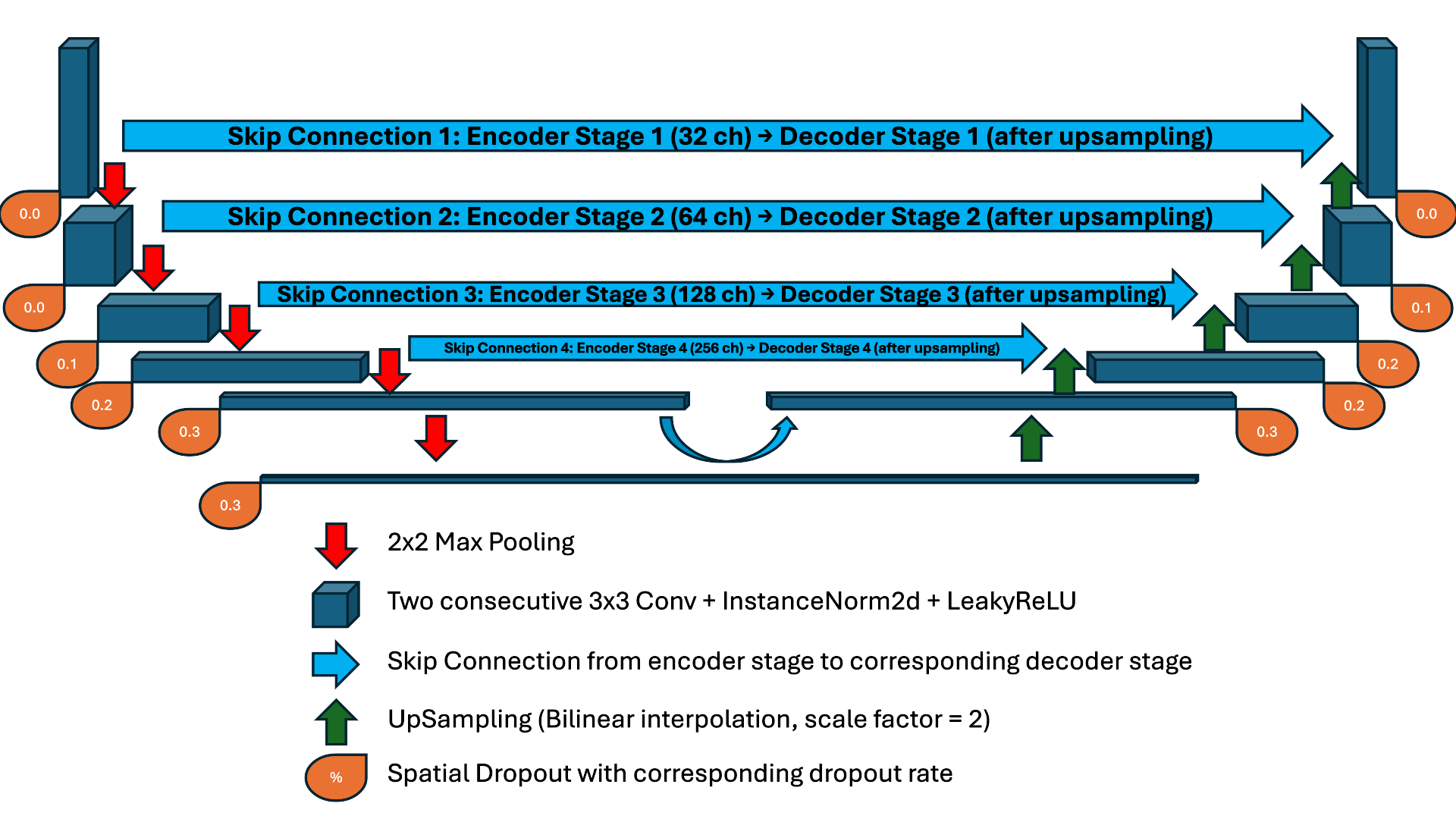

Fig. 3. Custom UNet -- 6-stage encoder-decoder with skip connections, InstanceNorm, LeakyReLU, and spatial dropout.

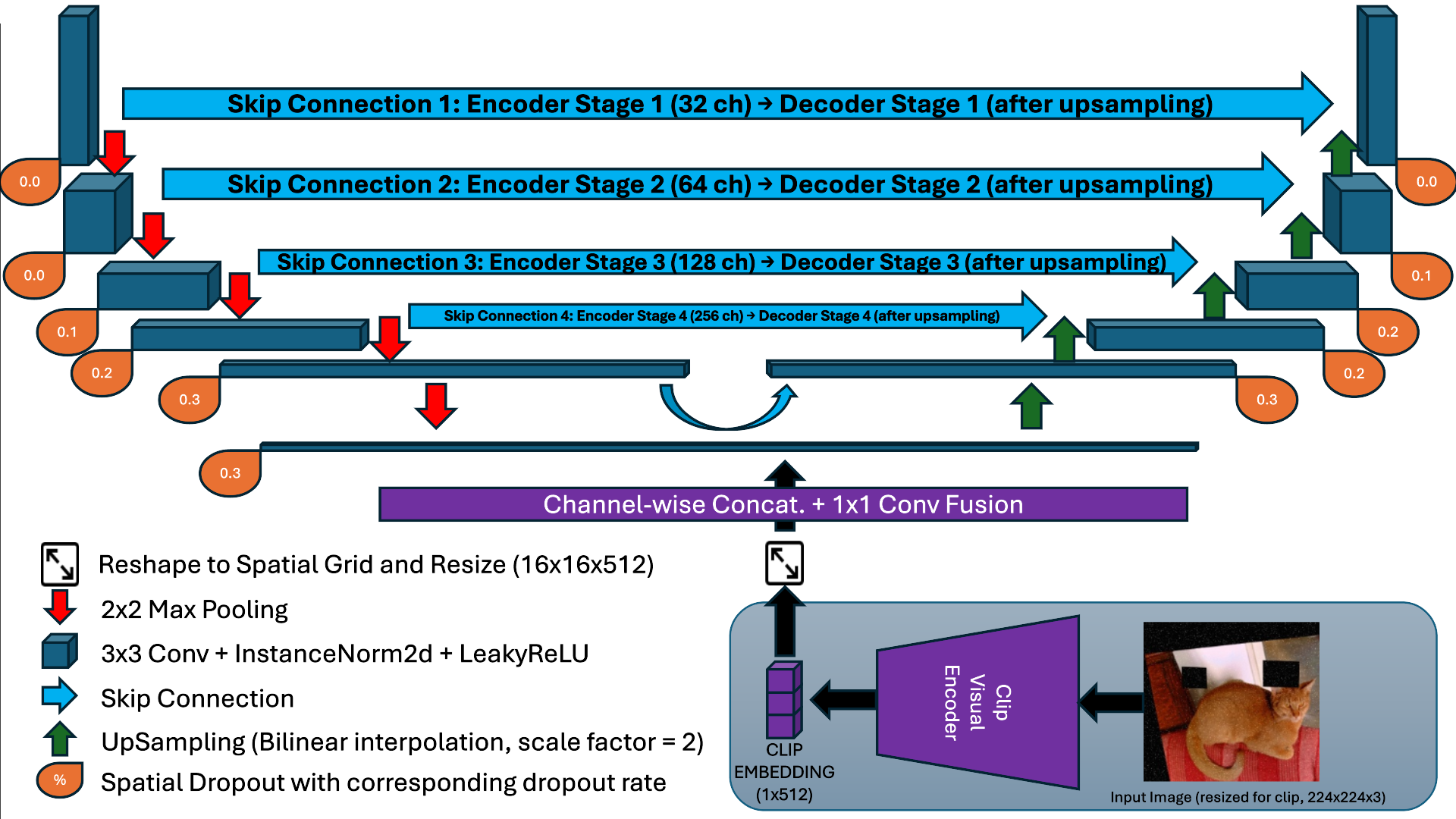

Fig. 4. CLIP-UNet fusion. CLIP injects a global 512-dim semantic vector at the bottleneck via channel-wise concat + 1x1 conv.

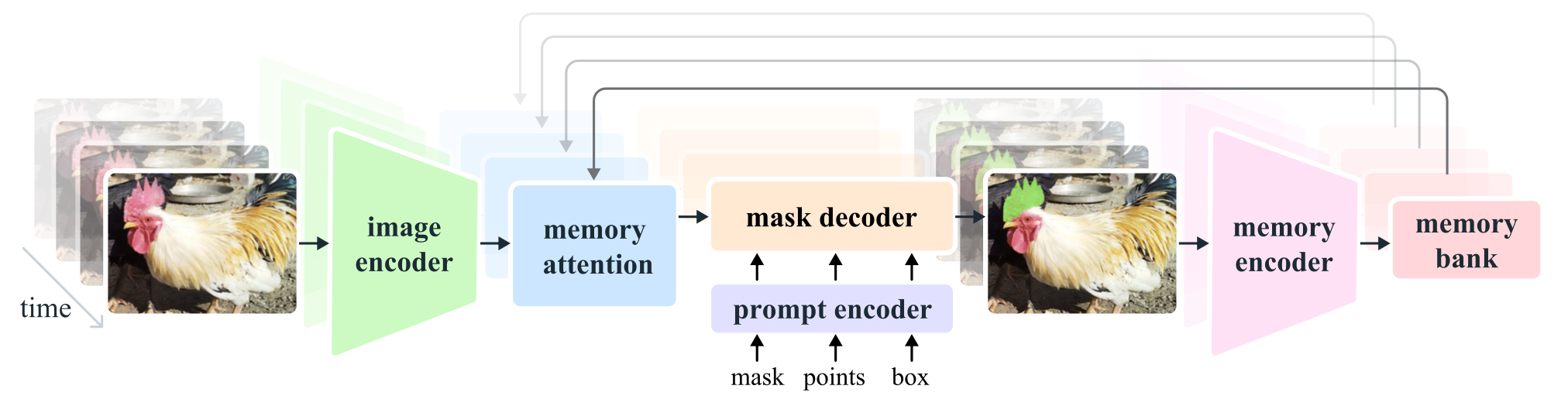

Fig. 5. SAM2 architecture -- Image Encoder, Memory Attention, Prompt Encoder, and Memory Bank for iterative refinement.

03 · Results & Evaluation

Performance Comparison

SAM2 achieves the highest scores overall (binary task), while custom UNet leads on multi-class segmentation. The autoencoder approach fails on the cat class entirely -- a clear case of objective mismatch.

Charts: mIoU by model; per-class Dice score (UNet, CLIP+UNet, AE+UNet).

Overall Performance Summary

| Method | mIoU | mDice | Pixel Acc |
|---|---|---|---|
| SAM2 (binary) | 0.815 | 0.889 | 0.917 |
| Our UNet | 0.690 | 0.750 | 0.880 |
| CLIP + UNet | 0.600 | 0.660 | 0.820 |
| AE + UNet | 0.330 | 0.250 | 0.660 |
| Baseline | 0.330 | -- | -- |
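
For readers unfamiliar with the three metrics in the table, the sketch below shows one common way to compute them for a single prediction. It is an illustration under stated assumptions, not the evaluation code behind the numbers above: pixels labelled 255 are excluded, classes absent from both prediction and ground truth are skipped when averaging, and the name `segmentation_metrics` is hypothetical.

```python
import numpy as np

def segmentation_metrics(pred, target, num_classes=3, ignore_label=255):
    """Mean IoU, mean Dice, and pixel accuracy over non-ignored pixels."""
    valid = target != ignore_label          # drop "ignore" pixels entirely
    pred, target = pred[valid], target[valid]
    ious, dices = [], []
    for c in range(num_classes):
        p, t = pred == c, target == c
        total = p.sum() + t.sum()
        if total == 0:
            continue                        # class absent on both sides
        inter = np.logical_and(p, t).sum()
        union = np.logical_or(p, t).sum()
        ious.append(inter / union)          # IoU  = |P ∩ T| / |P ∪ T|
        dices.append(2 * inter / total)     # Dice = 2|P ∩ T| / (|P| + |T|)
    return {
        "mIoU": float(np.mean(ious)),
        "mDice": float(np.mean(dices)),
        "pixel_acc": float((pred == target).mean()),
    }

# Usage on a toy 2x4 mask (0 = background, 1 = cat, 2 = dog, 255 = ignore).
target = np.array([[0, 0, 1, 1], [2, 2, 255, 255]])
pred = np.array([[0, 1, 1, 1], [2, 0, 2, 0]])
scores = segmentation_metrics(pred, target)
```

Pixel accuracy is dominated by the background class, which is why it stays comparatively high in the table even when the per-class overlap metrics are poor.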

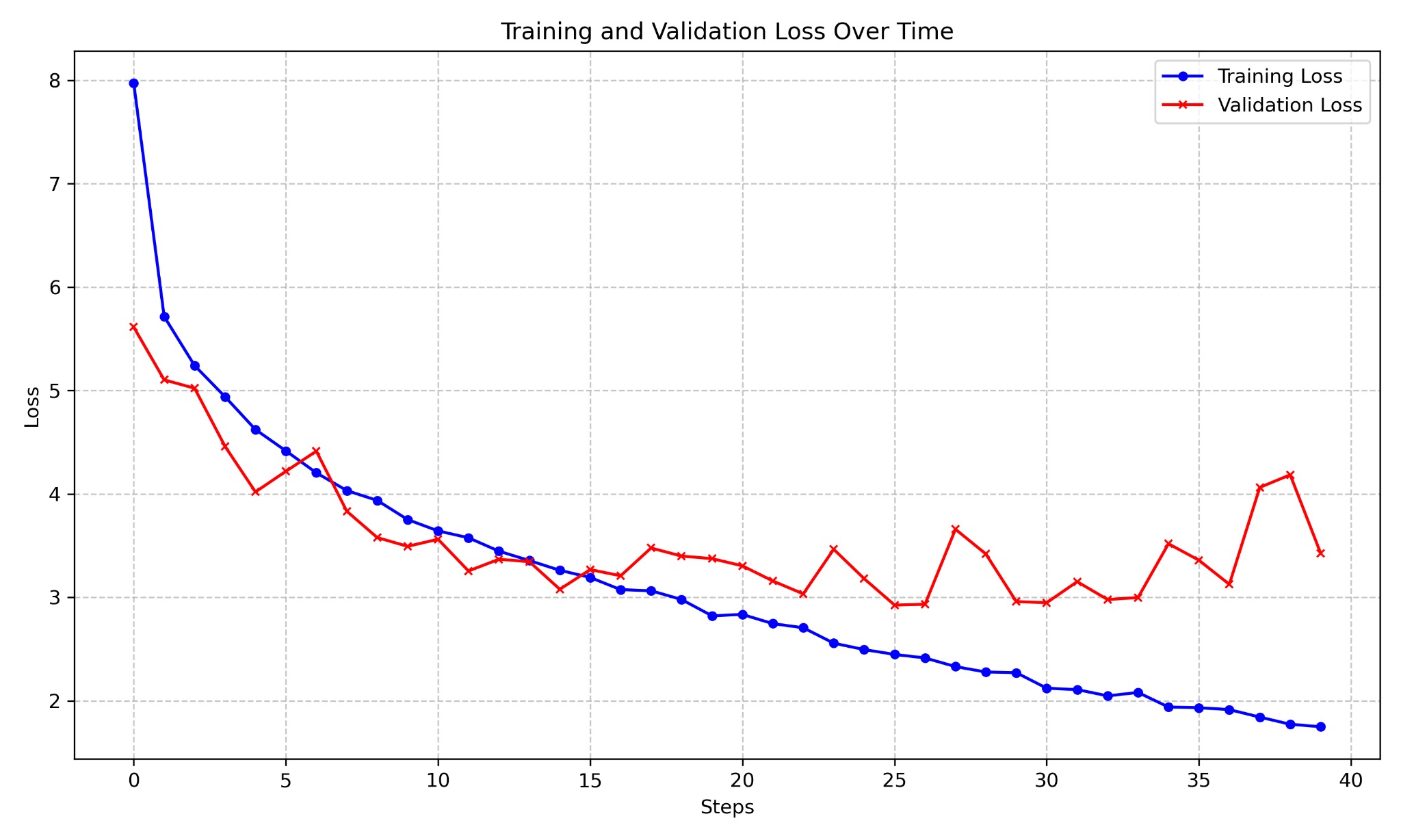

Fig. 6. SAM2 training vs. validation loss over 40 epochs. Loss drops sharply in the first 10 epochs; partial overfitting appears from epoch 15 onward.

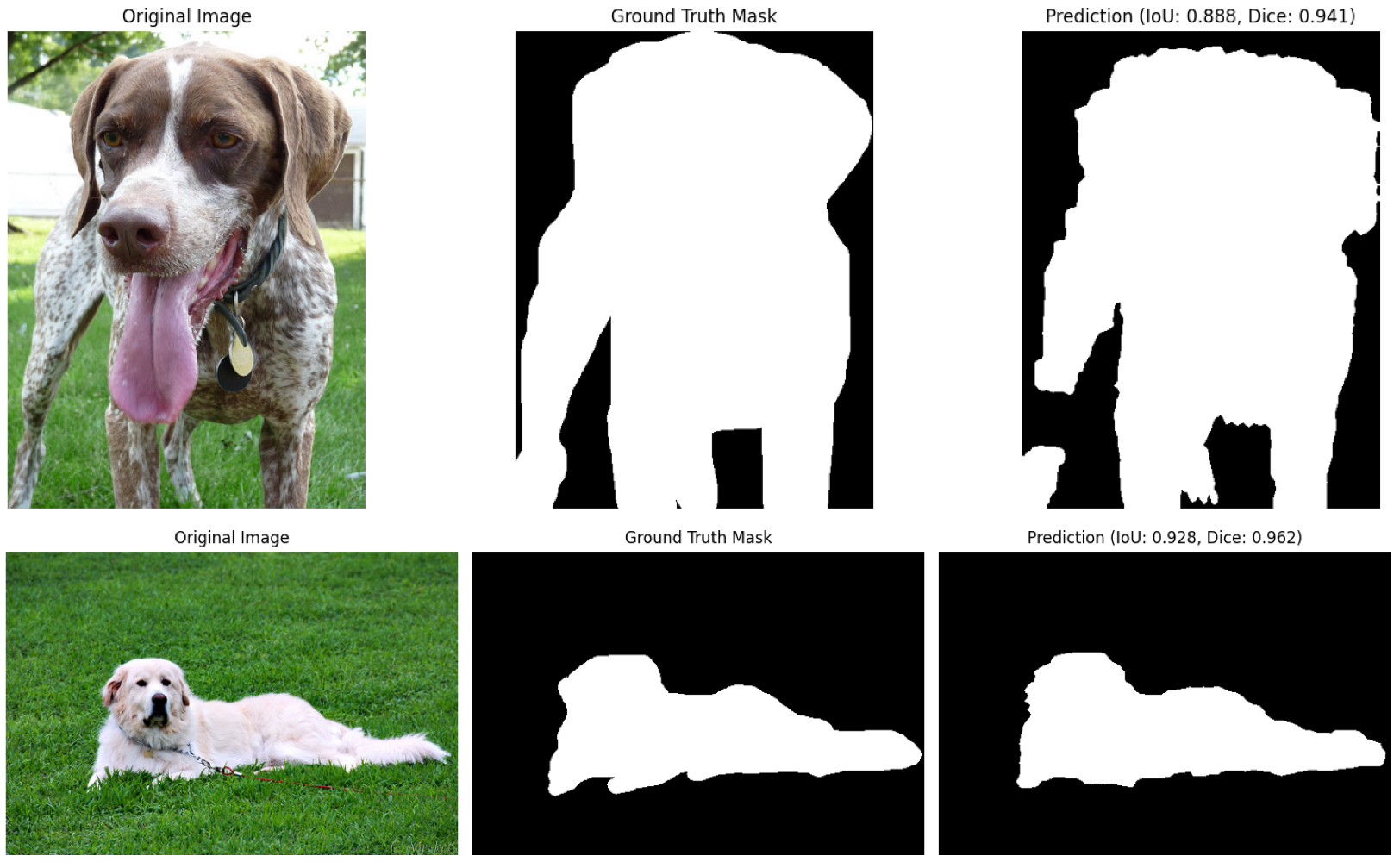

Fig. 7. Original image → ground truth mask → SAM2 prediction (IoU 0.888, Dice 0.841). SAM2 captures fine contours on tails and ears.

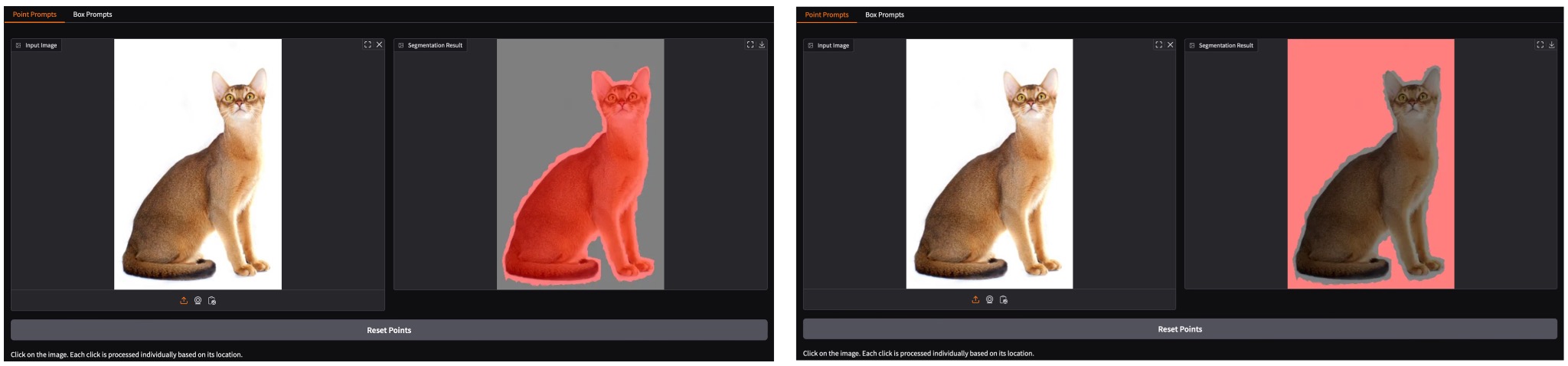

Fig. 8. Point prompt UI. Left: foreground click (red mask on pet). Right: background click (inverted mask).

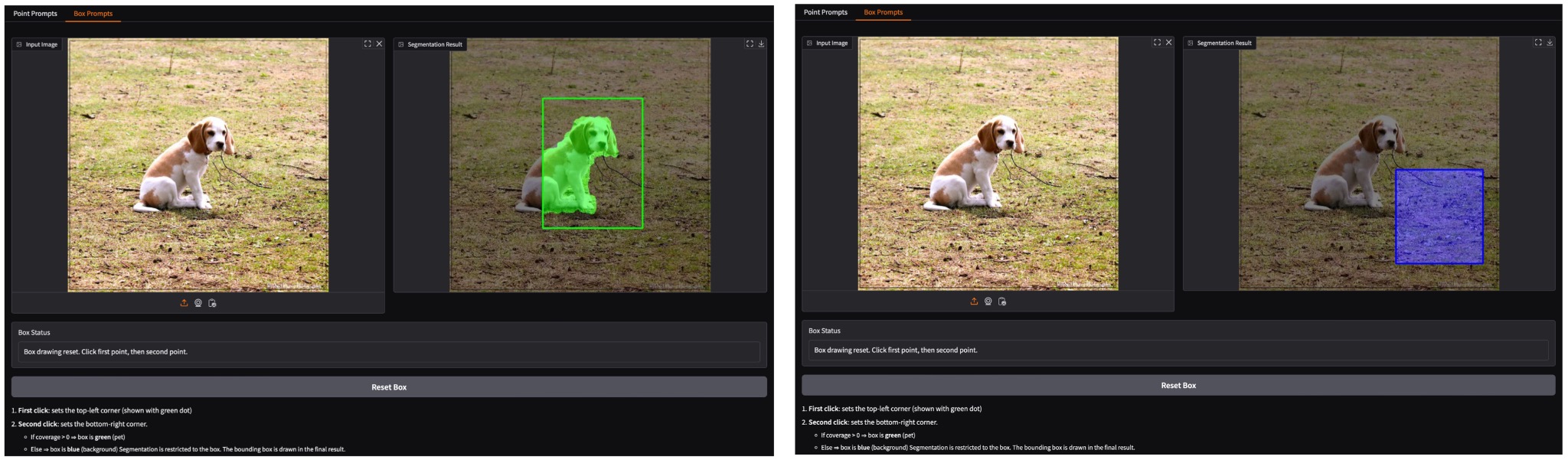

Fig. 9. Box prompt UI. Green box = foreground region (pet detected); blue box = background region (no object).

Fig. 10-12. Side-by-side model predictions on three test samples (Bombay cat, American Bulldog, Miniature Pinscher). Each row: input → ground truth → prediction → error map → class confidence maps.

04 · Interactive Demo Application

Gradio UI with Point & Box Prompts

The SAM2 model is wrapped in an interactive Gradio web application. Users upload an image and interact through two prompt modes -- point clicks or bounding boxes -- to guide the segmentation. The application runs locally via PyTorch and requires no cloud API.

Point Prompts

Each click is automatically classified as foreground (pet) or background by sampling the default mask around the click location. Red overlay = foreground; inverted colour = background.
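
One way the automatic click classification could work is a majority vote over a small window of the default mask. The sketch below is an assumption about the mechanism, not the demo's code: the window `radius`, the 50% threshold, and the name `classify_click` are illustrative. The returned value follows the usual SAM-style point-label convention (1 = foreground, 0 = background).

```python
import numpy as np

def classify_click(default_mask, x, y, radius=5):
    """Return 1 (foreground) or 0 (background) for a click at pixel (x, y).

    `default_mask` is a boolean HxW array, True where the pet was segmented
    before any prompt was given.
    """
    h, w = default_mask.shape
    # Clamp the click so a point on the image border still yields a window.
    x = int(np.clip(x, 0, w - 1))
    y = int(np.clip(y, 0, h - 1))
    window = default_mask[max(0, y - radius):y + radius + 1,
                          max(0, x - radius):x + radius + 1]
    # Majority vote: foreground if at least half the window is pet.
    return 1 if window.mean() >= 0.5 else 0

# Usage: a toy mask with the pet occupying the centre of the image.
toy_mask = np.zeros((100, 100), dtype=bool)
toy_mask[30:70, 30:70] = True
centre_label = classify_click(toy_mask, 50, 50)
corner_label = classify_click(toy_mask, 5, 5)
```

Sampling a window rather than the single clicked pixel makes the label less sensitive to clicks that land on a hole or a ragged edge in the default mask.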

Box Prompts

Two clicks define a bounding box (top-left -> bottom-right). If the box covers pet pixels (coverage > 0), it is coloured green and the model segments within that region. Blue box = background region.
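
The coverage rule for boxes can be sketched as follows. This is a hedged illustration: `classify_box` is a hypothetical helper, `pet_mask` stands in for whatever mask the demo uses to decide where the pet is, and sorting the two corners is an added convenience so that clicks in either order work.

```python
import numpy as np

def classify_box(pet_mask, first_click, second_click):
    """Build a box from two (x, y) clicks and colour it by pet coverage."""
    (x1, y1), (x2, y2) = first_click, second_click
    x_lo, x_hi = sorted((x1, x2))
    y_lo, y_hi = sorted((y1, y2))
    region = pet_mask[y_lo:y_hi + 1, x_lo:x_hi + 1]
    # Coverage = fraction of box pixels that fall on the pet.
    coverage = float(region.mean()) if region.size else 0.0
    return {
        "box": (x_lo, y_lo, x_hi, y_hi),
        "coverage": coverage,
        "colour": "green" if coverage > 0 else "blue",
    }

# Usage: the pet occupies rows/columns 30..69 of a 100x100 image.
pet_mask = np.zeros((100, 100), dtype=bool)
pet_mask[30:70, 30:70] = True
overlapping = classify_box(pet_mask, (20, 20), (40, 40))
empty = classify_box(pet_mask, (0, 0), (10, 10))
```

A threshold of zero means even a one-pixel overlap turns the box green; a stricter cut-off would be a design choice beyond what the description states.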

Reset Points

Users can discard all prompts at any time and start fresh, enabling iterative refinement of difficult cases.

Demo: Interactive Prompt-Based Image Segmentation Application with SAM2 and Gradio


05 · Key Findings

What We Learned

01

Custom UNet beats CLIP fusion

Despite CLIP's rich semantic prior, the naive bottleneck fusion (bilinear upsampling of a 1x1 global vector) introduces spatial artifacts. A task-specific UNet trained from scratch outperforms the CLIP-enhanced variant on all three classes.
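
The fusion step can be made concrete with a small NumPy sketch (NumPy stands in for PyTorch here so the example has no heavy dependencies). Upsampling a 1x1 map gives the same value at every location, so the sketch simply broadcasts the CLIP vector across the bottleneck grid before the concat and 1x1 convolution. The channel counts and the name `fuse_clip` are assumptions for illustration.

```python
import numpy as np

def fuse_clip(bottleneck, clip_vec, weight, bias):
    """Concat a global vector onto a feature map, then apply a 1x1 conv.

    bottleneck: (C, H, W) UNet bottleneck features
    clip_vec:   (D,) global CLIP embedding (D = 512 in the figure)
    weight:     (C_out, C + D) 1x1 convolution kernel
    bias:       (C_out,)
    """
    _, h, w = bottleneck.shape
    # Tile the global vector so every spatial location sees the same values.
    tiled = np.broadcast_to(clip_vec[:, None, None], (clip_vec.shape[0], h, w))
    stacked = np.concatenate([bottleneck, tiled], axis=0)   # (C + D, H, W)
    # A 1x1 conv is one linear map applied independently at each pixel.
    return np.einsum("oc,chw->ohw", weight, stacked) + bias[:, None, None]

# Usage with small hypothetical shapes.
rng = np.random.default_rng(0)
feat = rng.normal(size=(8, 4, 4))
vec = rng.normal(size=512)
W = rng.normal(size=(16, 8 + 512))
b = rng.normal(size=16)
fused = fuse_clip(feat, vec, W, b)

# Contribution of the CLIP channels alone: identical at every location.
clip_part = fused - np.einsum("oc,chw->ohw", W[:, :8], feat)
```

In this formulation the CLIP branch contributes the same per-channel offset at every pixel, so it carries no information about where the pet is; that may help explain why it does not improve a pixel-level task.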

02

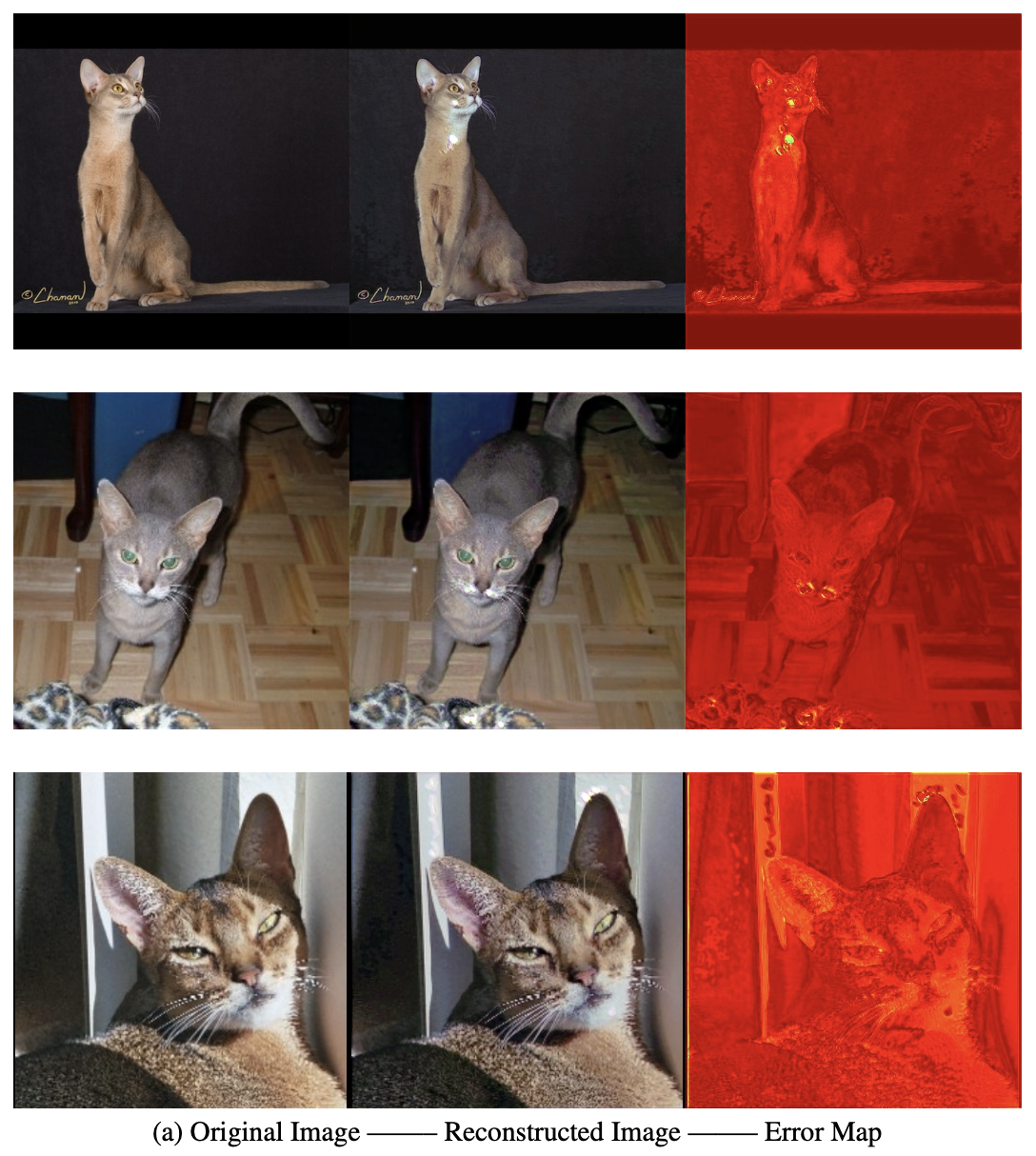

The autoencoder paradox

Reconstruction quality (SSIM = 0.88, PSNR = 28.23 dB) does not transfer to segmentation. The autoencoder's encoder learns to preserve pixel similarity, not class boundaries -- resulting in complete failure on the cat class (Dice = 0.00).
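
A short PSNR sketch shows why a good reconstruction score says little about segmentation: the metric is a function of pixel error alone and never sees a class label. The peak value of 255 assumes 8-bit images, and the source does not state how its PSNR was computed, so treat this as the textbook definition rather than the project's evaluation code.

```python
import numpy as np

def psnr(original, reconstructed, max_value=255.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(MAX^2 / MSE)."""
    diff = original.astype(np.float64) - reconstructed.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")     # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

# Usage: a reconstruction that is off by 10 grey levels everywhere.
image = np.full((64, 64), 100, dtype=np.uint8)
recon = image + 10
score = psnr(image, recon)
```

An encoder can score well here by reproducing textures and lighting faithfully while encoding nothing that separates a cat from a dog, which is the objective mismatch described above.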

03

SAM2 excels with minimal prompts

A single point click is sufficient for SAM2 to achieve IoU = 0.815 -- a 63% relative improvement over the 0.5 baseline. Memory attention enables iterative refinement, making it especially effective on complex boundaries like tails and ears.
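
Read as a relative gain, the 63% figure follows directly from the two IoU values quoted above:

```latex
\[
\frac{\mathrm{IoU}_{\text{SAM2}} - \mathrm{IoU}_{\text{baseline}}}{\mathrm{IoU}_{\text{baseline}}}
  = \frac{0.815 - 0.5}{0.5} = 0.63
\]
```

In absolute terms this is a gain of 0.315 IoU points.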

04

CPU training constraints matter

Training SAM2 from scratch with batch size 1 on CPU leads to partial overfitting from epoch 15 onward. Validation loss spikes at epochs 20, 27, and 38, suggesting the model memorises training distribution details that don't generalise.